No more LLM model drift

Pragmatum continuously measures and resolves LLM model drift as your underlying data changes, reducing hallucinations and improving quality metrics.

How it works

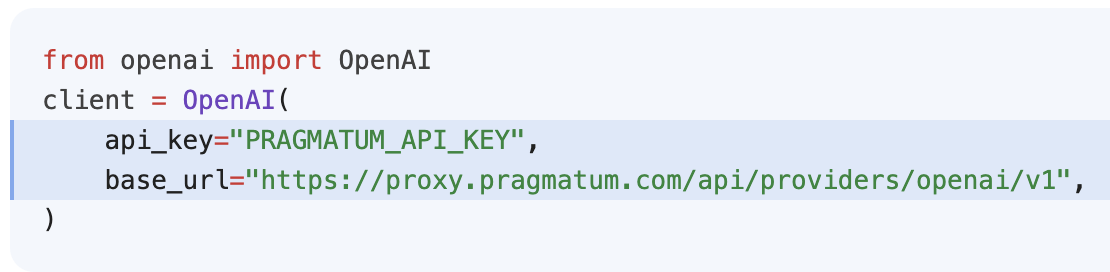

Fast continuous integration

Capture your production data with our one-line code proxy (with automatic caching, failover, and security).
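To make the idea concrete, here is a minimal sketch of what a capturing proxy with caching and failover might do conceptually. It is not Pragmatum's API: the class name `CachingFailoverProxy`, its `complete` method, and the `captured` list are all hypothetical, backends are modeled as plain Python callables, and the security layer is left out entirely.

```python
from typing import Callable, Dict, List, Sequence, Tuple


class CachingFailoverProxy:
    """Hypothetical illustration only; not Pragmatum's actual interface."""

    def __init__(self, backends: Sequence[Callable[[str], str]]):
        self.backends = list(backends)             # tried in order
        self.cache: Dict[str, str] = {}            # prompt -> response
        self.captured: List[Tuple[str, str]] = []  # production data log

    def complete(self, prompt: str) -> str:
        # Caching: identical prompts are answered without a backend call.
        if prompt in self.cache:
            return self.cache[prompt]
        last_error = None
        # Failover: move on to the next backend when one raises.
        for backend in self.backends:
            try:
                response = backend(prompt)
            except Exception as exc:
                last_error = exc
                continue
            self.cache[prompt] = response
            self.captured.append((prompt, response))
            return response
        raise RuntimeError("all backends failed") from last_error
```

In this sketch, every answered prompt lands in `captured`, which stands in for the production data that later steps analyze.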

Upload existing training and eval datasets (JSON, CSV, Parquet, or Hugging Face).

Quality Regression

Using our proprietary signals, identify clusters of prompts that are regressing as the data behind your LLM (e.g., RAG sources, vector DB) changes.
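As a rough illustration of per-cluster regression detection, the sketch below compares the mean quality score of each prompt cluster between a baseline window and a current window. The proprietary signals are not public, so the numeric score, the cluster labels, the function name `regressing_clusters`, and the `min_drop` threshold are all assumptions made for this example.

```python
from collections import defaultdict
from statistics import mean


def regressing_clusters(baseline, current, min_drop=0.05):
    """Return {cluster_id: drop} for clusters whose mean score fell.

    `baseline` and `current` are iterables of (cluster_id, score) pairs.
    A cluster is reported when its mean score dropped by at least
    `min_drop` (a hypothetical threshold) between the two windows.
    """
    def cluster_means(rows):
        grouped = defaultdict(list)
        for cluster_id, score in rows:
            grouped[cluster_id].append(score)
        return {c: mean(scores) for c, scores in grouped.items()}

    before = cluster_means(baseline)
    after = cluster_means(current)
    drops = {}
    # Only clusters present in both windows can be compared.
    for cluster_id in before.keys() & after.keys():
        drop = before[cluster_id] - after[cluster_id]
        if drop >= min_drop:
            drops[cluster_id] = drop
    return drops
```

Comparing per cluster rather than overall keeps a regression in one topic from being hidden by stable scores elsewhere.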

Update your training and eval datasets with missing queries to make them more representative of what your customers are asking.
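One simple way to picture "missing queries" is a set difference between what customers ask in production and what the eval dataset already covers. The sketch below assumes exact matching after light normalization; the helper names `normalize` and `missing_queries` are hypothetical, and a real system would likely match on meaning rather than on text.

```python
import re


def normalize(query: str) -> str:
    """Lowercase, trim, and collapse whitespace so near-identical text matches."""
    return re.sub(r"\s+", " ", query.strip().lower())


def missing_queries(production, eval_set):
    """Return production queries not covered by eval_set, in first-seen order."""
    seen = {normalize(q) for q in eval_set}
    missing = []
    for query in production:
        key = normalize(query)
        if key not in seen:
            seen.add(key)  # also deduplicates repeated production queries
            missing.append(query)
    return missing
```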

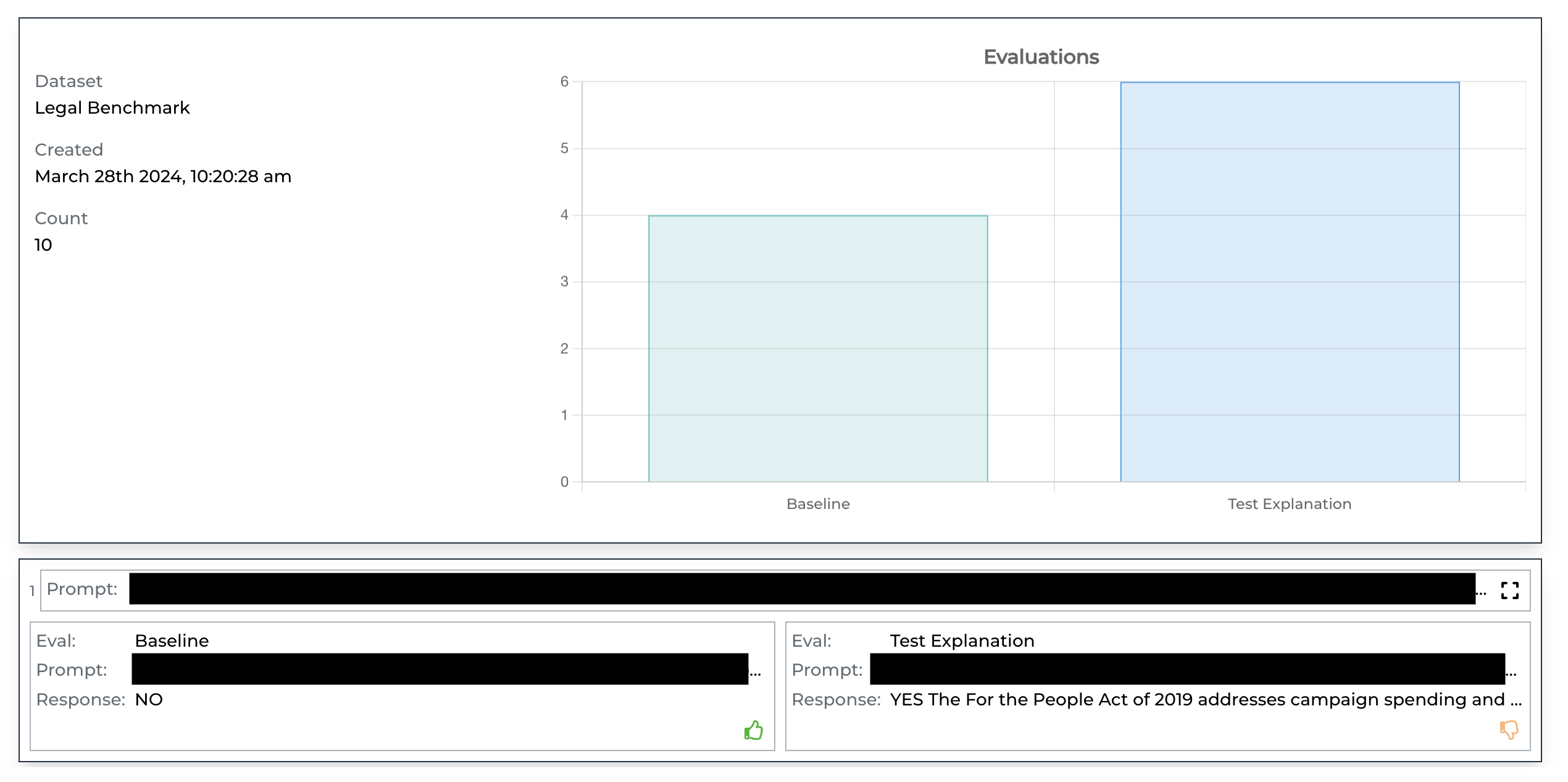

Online Evals

Automate daily evals and quality reporting from a diverse sampling of your data.
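A diverse sample can be approximated by drawing a fixed number of prompts from each cluster instead of sampling uniformly, so that high-traffic topics do not crowd out rare ones. This is a sketch under assumptions: the `(cluster_id, prompt)` record shape, the function name `diverse_sample`, and the `per_cluster` parameter are invented for illustration.

```python
import random
from collections import defaultdict


def diverse_sample(records, per_cluster, seed=0):
    """Draw up to `per_cluster` prompts from every cluster.

    `records` is an iterable of (cluster_id, prompt) pairs. A fixed seed
    keeps a given day's sample reproducible.
    """
    rng = random.Random(seed)
    grouped = defaultdict(list)
    for cluster_id, prompt in records:
        grouped[cluster_id].append(prompt)

    sample = []
    for cluster_id in sorted(grouped):
        prompts = grouped[cluster_id]
        k = min(per_cluster, len(prompts))  # small clusters contribute all they have
        sample.extend((cluster_id, p) for p in rng.sample(prompts, k))
    return sample
```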

With your now up-to-date datasets, run A/B evals with different models and prompts to improve your quality metrics.
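An A/B eval of this kind can be summarized by scoring two variants on the same examples and comparing them pairwise. The sketch below assumes each variant already has one numeric score per example, in the same order; the function name `compare_variants` and the result keys are hypothetical, and how scores are produced is out of scope here.

```python
def compare_variants(scores_a, scores_b):
    """Compare two variants scored on the same examples, in the same order."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both variants must be scored on the same examples")
    if not scores_a:
        raise ValueError("at least one example is required")

    n = len(scores_a)
    wins_a = sum(a > b for a, b in zip(scores_a, scores_b))
    wins_b = sum(b > a for a, b in zip(scores_a, scores_b))
    return {
        "mean_a": sum(scores_a) / n,
        "mean_b": sum(scores_b) / n,
        "wins_a": wins_a,
        "wins_b": wins_b,
        "ties": n - wins_a - wins_b,
    }
```

Reporting wins and ties alongside the means shows whether a higher average comes from broad improvement or from a few large swings.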